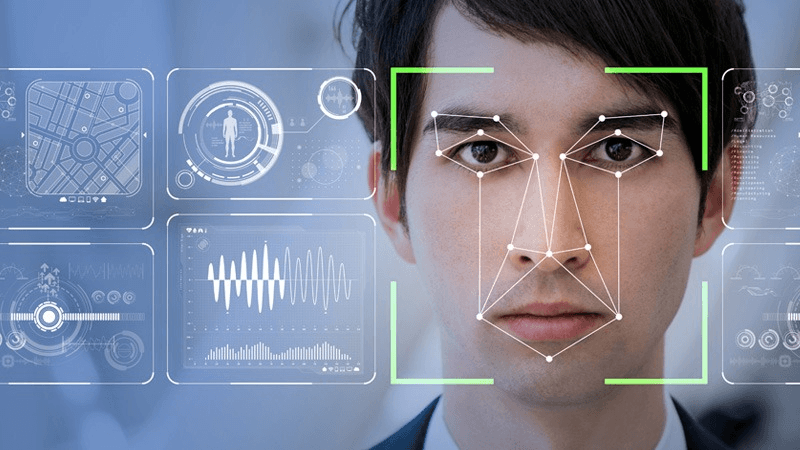

Ready to unlock the power of face detection? Want to dive into a world where computers identify and locate human faces with remarkable accuracy? Face detection is the first step in applications like facial recognition, emotion analysis, and augmented reality, and libraries such as OpenCV make it accessible to developers working with images and video captured by a camera. But what exactly is face detection, and how does it work? Face detection is the task of finding the faces in an image or video frame, usually by returning a bounding box around each one. Many detectors also locate facial landmarks (keypoints) such as the eyes, the nose, and the corners of the mouth.

In this blog post, we’ll look at the algorithms that make face detection possible. We’ll cover early developments in the 1990s and explore the game-changing Viola-Jones algorithm introduced in 2001, which uses Haar-like features and a cascade of boosted classifiers and is implemented in OpenCV. Then we’ll discover how deep learning models, such as convolutional neural networks, have pushed face detection accuracy to new heights.

But that’s not all! We’ll also compare face detection with face recognition and uncover the similarities and differences between these two techniques. So buckle up as we embark on this fascinating journey through the world of face detection!

Understanding Face Detection Methods

Key Techniques Explored

Traditional face detection techniques have been widely used for decades. These detectors scan an image to identify and locate faces. One popular method is the Viola-Jones algorithm, which uses Haar-like features and a cascade of classifiers; OpenCV ships an implementation of it together with pre-trained face cascades. The approach trains a boosted classifier on simple rectangular features that respond to intensity contrasts such as edges and lines, for example the darker eye region sitting above the brighter cheeks. While this technique works well on frontal faces, it can struggle with complex scenarios such as rotated faces or occlusions.
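
To make the Haar-like feature idea concrete, here is a minimal pure-Python sketch of two building blocks Viola-Jones relies on: an integral image (summed-area table), which lets you sum any rectangle of pixels in constant time, and a two-rectangle feature that compares a top band with the band below it. The tiny nested-list "image", the function names, and the feature layout are illustrative assumptions, not OpenCV's implementation.

```python
from typing import List

Image = List[List[int]]


def integral_image(img: Image) -> List[List[int]]:
    """Summed-area table padded with a leading row and column of zeros.

    ii[y][x] holds the sum of all pixels above and to the left of (x, y),
    exclusive, so rectangle sums need no boundary checks.
    """
    h, w = len(img), len(img[0])
    ii = [[0] * (w + 1) for _ in range(h + 1)]
    for y in range(h):
        row_sum = 0
        for x in range(w):
            row_sum += img[y][x]
            ii[y + 1][x + 1] = ii[y][x + 1] + row_sum
    return ii


def rect_sum(ii: List[List[int]], x: int, y: int, w: int, h: int) -> int:
    """Sum of the w-by-h rectangle whose top-left pixel is (x, y), in O(1)."""
    return ii[y + h][x + w] - ii[y][x + w] - ii[y + h][x] + ii[y][x]


def two_rect_feature(ii: List[List[int]], x: int, y: int, w: int, h: int) -> int:
    """Bottom band minus top band of a w-by-2h window.

    A large positive value means a dark band sits above a brighter band,
    the kind of contrast an eye region above the cheeks produces.
    """
    top = rect_sum(ii, x, y, w, h)
    bottom = rect_sum(ii, x, y + h, w, h)
    return bottom - top
```

Because every rectangle sum costs four lookups no matter its size, a detector can evaluate thousands of such features per window cheaply, which is a large part of why Viola-Jones runs in real time.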

In recent years, deep learning models trained on large datasets have transformed face detection. Convolutional neural networks (CNNs) learn intricate patterns and features directly from images, which lets them detect faces with high accuracy. Their impressive performance has made them the go-to choice for many researchers and developers in object detection and related tasks such as face recognition, and OpenCV can run many pre-trained CNN detectors through its dnn module.

Moreover, some advanced techniques combine traditional methods with deep learning to achieve even better results. By integrating the strengths of both, these hybrid methods can overcome individual limitations and improve accuracy in challenging scenarios. For example, a fast Viola-Jones cascade can quickly discard most background regions and pass only the remaining candidates to a slower but more accurate CNN, improving detection performance while keeping processing close to real time.
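
The hybrid idea can be sketched as a two-stage filter: a cheap first stage rejects most candidate windows, and only the survivors reach an expensive second stage. In this minimal sketch both stages are hypothetical functions passed in as arguments, so the control flow runs on its own; in a real system you would plug in an actual cascade and an actual network.

```python
from typing import Callable, List, Sequence, Tuple

Box = Tuple[int, int, int, int]  # (x, y, width, height)


def two_stage_detect(
    candidates: Sequence[Box],
    cheap_filter: Callable[[Box], bool],
    expensive_score: Callable[[Box], float],
    threshold: float = 0.5,
) -> Tuple[List[Box], int]:
    """Run the costly verifier only on boxes that pass the cheap filter.

    Returns the accepted boxes and how many times the expensive stage ran,
    which is the quantity a hybrid design tries to keep small.
    """
    accepted: List[Box] = []
    expensive_calls = 0
    for box in candidates:
        if not cheap_filter(box):  # fast rejection, like an early cascade stage
            continue
        expensive_calls += 1
        if expensive_score(box) >= threshold:  # slower, more accurate check
            accepted.append(box)
    return accepted, expensive_calls
```

For instance, if the cheap filter rejects one of four candidate windows, the expensive scorer runs three times instead of four; on real images, where the vast majority of windows contain no face, the savings are far larger.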

Motion Capture and Emotional Inference

Face detection is essential in motion capture systems for animation and gaming. By detecting the face and tracking facial movements frame by frame in real time, animators can capture expressions and gestures accurately and bring characters to life with animations that closely mimic human facial expressions.
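
Following a face from one frame to the next is often done by associating each new detection with the previous frame's box it overlaps most. The sketch below uses intersection over union (IoU) with greedy matching. The 0.3 threshold and the integer track-ID scheme are illustrative assumptions, and real motion-capture pipelines usually track facial landmarks as well, not just boxes.

```python
from typing import Dict, List, Optional, Tuple

Box = Tuple[float, float, float, float]  # (x1, y1, x2, y2)


def iou(a: Box, b: Box) -> float:
    """Intersection over union of two axis-aligned boxes."""
    ix1, iy1 = max(a[0], b[0]), max(a[1], b[1])
    ix2, iy2 = min(a[2], b[2]), min(a[3], b[3])
    inter = max(0.0, ix2 - ix1) * max(0.0, iy2 - iy1)
    area_a = (a[2] - a[0]) * (a[3] - a[1])
    area_b = (b[2] - b[0]) * (b[3] - b[1])
    union = area_a + area_b - inter
    return inter / union if union > 0 else 0.0


def update_tracks(
    tracks: Dict[int, Box], detections: List[Box], min_iou: float = 0.3
) -> Dict[int, Box]:
    """Greedily assign each detection to the best-overlapping unused track.

    Unmatched detections start new tracks; tracks with no match are dropped.
    """
    next_id = max(tracks, default=-1) + 1
    updated: Dict[int, Box] = {}
    for det in detections:
        best_id: Optional[int] = None
        best_iou = min_iou
        for tid, prev in tracks.items():
            if tid in updated:
                continue  # each track absorbs at most one detection
            score = iou(prev, det)
            if score >= best_iou:
                best_id, best_iou = tid, score
        if best_id is None:  # no good match: this is a new face
            best_id, next_id = next_id, next_id + 1
        updated[best_id] = det
    return updated
```

Calling update_tracks once per frame keeps a stable ID attached to each face, which is what lets an animation rig know that the expression in frame 2 belongs to the same person as in frame 1.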

Another fascinating application of face detection is emotional inference. Once a face has been detected, a classifier can analyze its expression to infer emotions such as happiness, sadness, or anger. This capability has various practical uses, such as market research studies that measure consumer reactions to advertisements or product designs.
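
Neural expression classifiers typically output one raw score (logit) per emotion, which is converted into probabilities with a softmax before the most likely label is reported. The emotion labels and scores in this sketch are made-up placeholders for a real model's output; only the softmax-and-argmax step is the point.

```python
import math
from typing import Dict, Tuple


def softmax(scores: Dict[str, float]) -> Dict[str, float]:
    """Convert raw class scores (logits) into probabilities that sum to 1."""
    peak = max(scores.values())  # subtract the max for numerical stability
    exps = {label: math.exp(s - peak) for label, s in scores.items()}
    total = sum(exps.values())
    return {label: e / total for label, e in exps.items()}


def top_emotion(scores: Dict[str, float]) -> Tuple[str, float]:
    """Return the most probable emotion label and its probability."""
    probs = softmax(scores)
    label = max(probs, key=probs.get)
    return label, probs[label]
```

With hypothetical logits such as {"happiness": 2.0, "sadness": 0.5, "anger": -1.0}, top_emotion reports "happiness" with a probability of roughly 0.79. Market research tools would aggregate these probabilities over many viewers and frames rather than trust a single prediction.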

Lip Reading with Face Detection

Combining lip reading with face detection enhances automatic speech recognition systems. A face detector first locates the face and the lip region in each frame; lip reading models then extract visual cues from the lips and convert them into corresponding phonetic representations. This is valuable in noisy environments, where audio-based speech recognition may struggle, and it can assist people with hearing impairments by providing real-time transcription of spoken words.

In surveillance scenarios, for example, lip reading combined with face detection can be used to analyze conversations captured on video footage. This can aid law enforcement agencies in investigations by providing additional context and evidence.

Basics of Face Detection

How Detection Systems Operate

Face detection systems operate by analyzing input data, such as images or video frames, to find patterns that resemble facial features. Their algorithms scan the input and identify regions of interest that are likely to contain faces. Once potential face regions are identified, additional processing confirms whether they really contain faces and rejects false alarms.
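
One classic way to scan is a sliding window: a fixed-size window moves across the image, and each position becomes a candidate region for the classifier. This minimal sketch only generates the candidate boxes. The window size, stride, and scale factor are arbitrary illustrative values, and the multi-scale variant grows the window so that faces of different sizes can fit it.

```python
from typing import Iterator, Tuple

Box = Tuple[int, int, int, int]  # (x, y, width, height)


def sliding_windows(
    img_w: int, img_h: int, win: int = 24, stride: int = 8
) -> Iterator[Box]:
    """Yield every win-by-win window that fits entirely inside the image."""
    for y in range(0, img_h - win + 1, stride):
        for x in range(0, img_w - win + 1, stride):
            yield (x, y, win, win)


def sliding_windows_multiscale(
    img_w: int, img_h: int, win: int = 24, stride: int = 8, scale: float = 1.25
) -> Iterator[Box]:
    """Repeat the scan with progressively larger windows to catch bigger faces."""
    size = win
    while size <= min(img_w, img_h):
        step = max(1, round(stride * size / win))  # stride grows with the window
        yield from sliding_windows(img_w, img_h, size, step)
        size = max(size + 1, round(size * scale))  # always grow, so the loop ends
```

Even a small 640x480 frame yields thousands of windows this way, which is why the confirmation step has to reject non-faces cheaply.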

These algorithms consider cues such as color, texture, and shape to distinguish facial features from the rest of the image. By analyzing these patterns, face detection models can accurately locate faces in many different contexts, and training them on larger and more varied data further improves their accuracy.

Core Capabilities

Modern face detection algorithms can handle variations in lighting conditions, poses, facial expressions, and occlusions. Whether the input is a well-lit photograph or a video recorded in a dimly lit room, these models can adapt and detect faces reliably, although accuracy still drops in the most difficult conditions.

One remarkable feature of modern face detection models is their ability to detect multiple faces in a single image or video frame simultaneously. This capability makes them invaluable in applications such as group photo analysis or video surveillance, where identifying several individuals at once is crucial.

Moreover, with advances in machine learning techniques and access to large-scale training datasets, face detection models have achieved high accuracy rates, and they continue to improve through iterative fine-tuning on real-world data.

Setting Up for Detection

Before applying face detection algorithms, it is often useful to preprocess images or video frames by resizing, normalizing, or enhancing them. This step makes the input match what the model expects and improves the quality of the detection results.
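
As a minimal sketch of typical preprocessing, the functions below convert an RGB image to grayscale with the ITU-R BT.601 luma weights, resize it with nearest-neighbor sampling, and scale pixel values to the range [0, 1]. Representing images as nested lists of (r, g, b) tuples is an assumption made to keep the example dependency-free; which steps a given model needs, and the input size it expects, depend on that model.

```python
from typing import List, Tuple

RGB = Tuple[int, int, int]
Gray = List[List[float]]


def to_grayscale(img: List[List[RGB]]) -> Gray:
    """Luma conversion using the ITU-R BT.601 weights."""
    return [[0.299 * r + 0.587 * g + 0.114 * b for (r, g, b) in row] for row in img]


def resize_nearest(img: Gray, new_w: int, new_h: int) -> Gray:
    """Nearest-neighbor resize: each output pixel copies a source pixel.

    Uses a simple floor mapping from output to input coordinates.
    """
    h, w = len(img), len(img[0])
    return [
        [img[y * h // new_h][x * w // new_w] for x in range(new_w)]
        for y in range(new_h)
    ]


def normalize(img: Gray) -> Gray:
    """Scale 8-bit intensities from [0, 255] to [0, 1]."""
    return [[v / 255.0 for v in row] for row in img]
```

Libraries like OpenCV provide optimized versions of all three steps; the sketch just shows what each one does to the pixel values.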

Choosing the appropriate face detection model depends on your specific requirements, the computational resources available, and the data being processed. Various pre-trained models cater to different needs: some are optimized for speed, while others prioritize accuracy. Programming languages like Python and libraries like OpenCV simplify the implementation process by providing ready-to-use tools, and cloud platforms such as Google Cloud offer hosted models as an alternative to running detection locally.

By choosing these resources carefully, developers can integrate face detection into their applications, whether it’s for facial recognition, emotion analysis, or any other use case that requires detecting and analyzing faces.

Advantages and Disadvantages of Face Detection

Benefits in Various Fields

Face detection technology has transformed various industries. In security systems, it plays a crucial role in access control and surveillance. By locating faces so that individuals can be identified, it strengthens existing security measures, whether for controlling access to restricted areas or for monitoring public spaces for potential threats.

Beyond security, face detection enables personalized user experiences on smartphones, social media platforms, and entertainment devices. Smartphones use facial recognition to unlock with a simple glance and to apply settings based on individual preferences. Social media platforms detect faces to suggest tags for friends in photos, making it easier to share memories, and some smart TVs can personalize content recommendations based on who is watching.

The medical field has also embraced face detection for various purposes. Analyzing facial features and expressions associated with certain conditions or diseases can assist in diagnosis, helping healthcare professionals make accurate assessments and plan appropriate treatment. Face detection is also used for patient monitoring, allowing some vital signs to be tracked remotely without invasive procedures. In mental health research, emotion analysis based on face detection helps researchers understand emotional states and develop interventions accordingly.

Potential Drawbacks

While face detection technology has numerous advantages, it also has potential drawbacks. Under challenging conditions, detection algorithms can produce false positives (reporting a face where there is none) or false negatives (missing a real face). Factors like low-resolution images or complex backgrounds reduce accuracy and can lead to incorrect identifications.
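
False positives and false negatives are usually summarized as precision (the fraction of reported faces that are real) and recall (the fraction of real faces that were found). This sketch shows the arithmetic on counts you would collect from an evaluation set. In practice, a detection usually counts as a true positive only if it overlaps a ground-truth face by more than some IoU threshold, and 0.5 is a common choice.

```python
from typing import Tuple


def precision_recall(tp: int, fp: int, fn: int) -> Tuple[float, float, float]:
    """Precision, recall, and F1 from detection counts.

    tp: faces correctly detected; fp: detections with no real face behind
    them; fn: real faces the detector missed.
    """
    precision = tp / (tp + fp) if tp + fp else 0.0
    recall = tp / (tp + fn) if tp + fn else 0.0
    f1 = (2 * precision * recall / (precision + recall)
          if precision + recall else 0.0)
    return precision, recall, f1
```

For example, with hypothetical counts of 90 correct detections, 10 false alarms, and 30 missed faces, precision is 0.9 but recall is only 0.75. Blurry or low-resolution test images typically hurt recall first.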

Privacy concerns have also been raised about face detection technologies being used without proper consent or for unethical purposes. As facial data is collected and stored by more and more entities, privacy safeguards become paramount, and striking a balance between convenience and protecting personal information is crucial when deploying these technologies.

Another important consideration is the potential for bias and discrimination in face detection models. If the training datasets are not diverse enough, they may not accurately represent different demographic groups, and the resulting models can perform worse for some groups than for others. This can lead to biased outcomes and discriminatory practices that further perpetuate inequalities.
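
One practical way to check for this kind of bias is to measure recall separately for each demographic group in a labeled evaluation set and compare the results. In this sketch the group labels and the (group, was_detected) records are hypothetical placeholders; a real audit needs a carefully annotated dataset collected with consent.

```python
from collections import defaultdict
from typing import Dict, Iterable, Tuple


def recall_by_group(records: Iterable[Tuple[str, bool]]) -> Dict[str, float]:
    """Fraction of ground-truth faces detected, computed per group.

    Each record is (group_label, was_detected) for one annotated face.
    """
    found: Dict[str, int] = defaultdict(int)
    total: Dict[str, int] = defaultdict(int)
    for group, detected in records:
        total[group] += 1
        found[group] += int(detected)
    return {g: found[g] / total[g] for g in total}


def max_recall_gap(rates: Dict[str, float]) -> float:
    """Largest recall difference between any two groups (0 means parity)."""
    return max(rates.values()) - min(rates.values()) if rates else 0.0
```

A large gap, such as 0.9 recall for one group and 0.7 for another, signals that the training data or the model needs attention before deployment.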

To overcome these challenges, it is essential to keep improving face detection algorithms by training them on more diverse datasets. Implementing robust privacy policies and obtaining informed consent from individuals before using their facial data can help address privacy concerns.

Face Detection in Technology and Applications

Tools and Technologies for Implementation

Several tools and technologies are available for implementing face detection. One popular option is OpenCV, an open-source computer vision library that offers a wide range of image processing and analysis functions. OpenCV includes pre-trained face detection models, which makes it easy to add this functionality to applications.

Deep learning frameworks such as TensorFlow and PyTorch provide tools for training custom face detection models. These frameworks let developers build their own neural networks and train them on large datasets to improve accuracy, making it possible to create highly specialized face detection systems tailored to specific requirements.

In addition to these libraries, cloud-based APIs offer convenient face detection services. For example, the Google Cloud Vision API and Microsoft Azure Face API provide ready-to-use services that can be integrated into applications with little effort. These APIs run machine learning models on the provider’s servers, so applications get accurate face detection without hosting a model themselves.
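
With a REST-style cloud API, most of the client-side work is building the request. As an illustration, this sketch prepares a JSON payload in the shape the Google Cloud Vision API’s images:annotate method accepts for the FACE_DETECTION feature. It does not send anything: authentication, the HTTP call, and error handling are left out, so check the current Cloud Vision documentation before relying on the exact field names.

```python
import base64
import json


def build_face_detection_request(image_bytes: bytes, max_results: int = 10) -> str:
    """Return a JSON body for a Cloud Vision images:annotate FACE_DETECTION call."""
    payload = {
        "requests": [
            {
                # The raw image bytes travel base64-encoded inside the JSON.
                "image": {"content": base64.b64encode(image_bytes).decode("ascii")},
                "features": [{"type": "FACE_DETECTION", "maxResults": max_results}],
            }
        ]
    }
    return json.dumps(payload)
```

The resulting body would be POSTed, with your credentials, to the API’s v1 images:annotate endpoint; the official client libraries build the same request for you.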

Face Detection in Photography and Marketing

The applications of face detection extend well beyond software development. In photography, face detection plays a crucial role in improving image quality and the user experience. Cameras with built-in face detection can automatically adjust autofocus based on detected faces, ensuring that subjects remain sharp and well-focused.

Face detection also enables automatic exposure adjustment by measuring the brightness of detected faces, which helps ensure that faces are properly exposed even in challenging lighting conditions. Red-eye removal, a common fix in flash photography, can also be automated by first locating the faces and eyes in the image.
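
Face-priority exposure can be approximated by measuring the mean brightness inside the detected face box and computing how far, in photographic stops (factors of two), the exposure would need to change to bring that face to a target level. This is a simplified sketch: the mid-gray target of 128 and the two-stop clamp are arbitrary illustrative choices, and real cameras change shutter speed, aperture, or gain before capture rather than working on finished pixels.

```python
import math
from typing import List, Tuple

Box = Tuple[int, int, int, int]  # (x, y, width, height)


def face_brightness(gray: List[List[float]], box: Box) -> float:
    """Mean intensity of the pixels inside the face box."""
    x, y, w, h = box
    values = [gray[r][c] for r in range(y, y + h) for c in range(x, x + w)]
    return sum(values) / len(values)


def exposure_correction_stops(
    gray: List[List[float]], box: Box, target: float = 128.0, limit: float = 2.0
) -> float:
    """Exposure change in stops that would move the face toward `target`.

    Positive means brighten, negative means darken; clamped to +/- `limit`.
    """
    mean = max(face_brightness(gray, box), 1e-6)  # avoid dividing by zero
    stops = math.log2(target / mean)
    return max(-limit, min(limit, stops))
```

Metering only the face box, instead of the whole frame, is what keeps a backlit subject from turning into a silhouette.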

In marketing, facial analysis built on face detection has become increasingly common. Companies use it to personalize advertisements based on demographic estimates: by estimating attributes such as age group or gender from facial features, marketers can deliver targeted messages that resonate with their intended audience.

Social media platforms also rely on face detection for various purposes. For instance, when users upload photos, face detection is used to suggest tags by identifying the people in the image. Popular filters and effects also use face detection to apply enhancements selectively to the detected facial features.

The Future of Face Detection Technology

Deep Learning Innovations

Deep learning has revolutionized face detection by allowing models to learn complex features directly from data. This breakthrough has led to significant advances in real-time face detection performance. Single-stage detectors such as Single Shot MultiBox Detector (SSD) and You Only Look Once (YOLO) have become powerful tools for face detection, offering fast and accurate results.

One notable deep learning innovation is the use of Generative Adversarial Networks (GANs) to generate synthetic face images. A GAN consists of two neural networks: a generator that creates fake images and a discriminator that tries to distinguish real images from fake ones. By training these networks against each other, GANs can produce highly realistic synthetic faces, which can then be used to augment training datasets for robust face detectors.
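
For reference, the adversarial game between the two networks is usually written as the standard GAN minimax objective, where D(x) is the discriminator’s estimated probability that an image x is real and G(z) is an image the generator produces from random noise z:

```latex
\min_G \max_D \;
  \mathbb{E}_{x \sim p_{\text{data}}}\left[\log D(x)\right]
  + \mathbb{E}_{z \sim p_z}\left[\log\left(1 - D(G(z))\right)\right]
```

The discriminator tries to push this value up by scoring real faces near 1 and generated ones near 0, while the generator tries to push it down by producing faces the discriminator mistakes for real ones.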

With these deep learning advances, face detection systems can now find faces in real-time video streams with remarkable accuracy. This has opened up new possibilities for applications such as surveillance systems, biometric authentication, and social media filters.

Developing Custom Vision Models

Beyond pre-trained models, developing custom vision models offers further opportunities to optimize for specific application requirements. Transfer learning is a popular technique that uses a pre-trained model as the starting point for training a custom face detector. By building on the knowledge already encoded in the pre-trained model, developers can greatly reduce training time while still achieving high accuracy.
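
The core of transfer learning can be shown without any framework: keep the pre-trained part of the network frozen, treat its outputs as fixed feature vectors, and train only a small new head on top. In this sketch the "backbone features" are hypothetical hand-written vectors standing in for a real network’s embeddings, and the head is a logistic-regression face/non-face classifier trained with plain gradient descent. A real detector head would also predict box coordinates; this one only classifies.

```python
import math
from typing import List, Sequence, Tuple


def sigmoid(z: float) -> float:
    return 1.0 / (1.0 + math.exp(-z))


def train_head(
    features: Sequence[Sequence[float]],
    labels: Sequence[int],
    lr: float = 0.5,
    epochs: int = 200,
) -> Tuple[List[float], float]:
    """Fit a logistic-regression head; the frozen backbone is never updated."""
    dim, n = len(features[0]), len(features)
    w, b = [0.0] * dim, 0.0
    for _ in range(epochs):
        grad_w, grad_b = [0.0] * dim, 0.0
        for x, y in zip(features, labels):
            # Gradient of the log loss with respect to the logit is (p - y).
            err = sigmoid(sum(wi * xi for wi, xi in zip(w, x)) + b) - y
            for i in range(dim):
                grad_w[i] += err * x[i] / n
            grad_b += err / n
        w = [wi - lr * gi for wi, gi in zip(w, grad_w)]
        b -= lr * grad_b
    return w, b


def predict(w: Sequence[float], b: float, x: Sequence[float]) -> int:
    """1 = face, 0 = not a face."""
    return int(sigmoid(sum(wi * xi for wi, xi in zip(w, x)) + b) >= 0.5)
```

Because only the head’s handful of parameters are trained, this step needs far less data and compute than training the whole network from scratch, which is the time saving the paragraph above describes.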

Annotated datasets with labeled faces play a crucial role in training accurate custom models. They provide the ground truth that teaches the model where faces are and what they look like. Such datasets often include thousands or even millions of labeled images covering diverse facial expressions, poses, lighting conditions, and occlusions, and with access to them developers can build models tailored to their specific needs.

By combining transfer learning with annotated datasets, developers can build highly accurate and efficient face detection systems. These custom models can also be fine-tuned to detect specific attributes or perform specialized tasks, such as emotion recognition or age estimation.

Tutorial Overview for Python-Based Face Detection

Preliminary Python Guide

Implementing face detection in Python takes a few preliminary steps. First, you need to install and import the necessary packages. Popular choices include OpenCV and deep learning frameworks like TensorFlow or PyTorch, which provide the functions and classes required for face detection. Depending on the package you choose, you may also need to download additional dependencies or model files.

Setting Up and Importing Packages

Before diving into face detection, install the relevant packages and import them into your Python environment. For instance, if you decide to use OpenCV, you can install it with pip: pip install opencv-python. Once installed, import it into your Python script with import cv2. Similarly, if you opt for a deep learning framework like TensorFlow or PyTorch, follow its installation instructions and import it accordingly.

Exploring Different Models

Several pre-trained models are available for face detection in Python, each with its own strengths and weaknesses. Popular options include Haar cascades, Dlib, MTCNN (Multi-task Cascaded Convolutional Networks), and RetinaFace. Evaluating each model’s performance on your own datasets or application is crucial for choosing the most suitable one.

For example, Haar cascades are fast but may struggle to detect faces at certain angles or under challenging lighting conditions. More advanced models like MTCNN or RetinaFace offer higher accuracy but are more computationally expensive. When choosing a model, consider factors such as real-time requirements and the computational resources available.

Preparing Data and Running Tasks

Once you have selected a face detection model, it’s time to prepare your data and run detection. Before feeding images into the model, it’s often necessary to preprocess them, for example by resizing or normalizing them. When training or fine-tuning a model, augmenting the training images (for example, by flipping them horizontally) can also improve detection accuracy.
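
Horizontal flipping is one of the simplest augmentations for face data, but the bounding boxes must be mirrored along with the pixels or the labels will no longer line up. A minimal sketch, assuming images are nested lists of pixel values and boxes are (x, y, width, height) in pixels:

```python
from typing import List, Tuple

Box = Tuple[int, int, int, int]  # (x, y, width, height)


def hflip_with_boxes(
    img: List[List[int]], boxes: List[Box]
) -> Tuple[List[List[int]], List[Box]]:
    """Mirror an image left-to-right and move each box to its mirrored position."""
    width = len(img[0])
    flipped = [row[::-1] for row in img]
    # A box that starts at x and spans w pixels now starts at width - x - w.
    new_boxes = [(width - x - w, y, w, h) for (x, y, w, h) in boxes]
    return flipped, new_boxes
```

If your annotations also include landmarks, remember that a flip turns the left eye into the right eye, so those labels have to be swapped as well.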

To detect faces in individual images or video frames, apply the chosen model to each input. The model analyzes the input and returns bounding boxes around the detected faces. These bounding boxes often include duplicate or overlapping detections of the same face, and post-processing steps such as non-maximum suppression can filter out these redundant detections.
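
Non-maximum suppression is short enough to sketch in full. This greedy version keeps the highest-scoring box, discards every remaining box that overlaps it by more than an IoU threshold, and repeats. The 0.3 threshold is an illustrative default; libraries generally expose it as a tunable parameter.

```python
from typing import List, Sequence, Tuple

Box = Tuple[float, float, float, float]  # (x1, y1, x2, y2)


def iou(a: Box, b: Box) -> float:
    """Intersection over union of two axis-aligned boxes."""
    ix1, iy1 = max(a[0], b[0]), max(a[1], b[1])
    ix2, iy2 = min(a[2], b[2]), min(a[3], b[3])
    inter = max(0.0, ix2 - ix1) * max(0.0, iy2 - iy1)
    union = (a[2] - a[0]) * (a[3] - a[1]) + (b[2] - b[0]) * (b[3] - b[1]) - inter
    return inter / union if union > 0 else 0.0


def non_max_suppression(
    boxes: Sequence[Box], scores: Sequence[float], iou_threshold: float = 0.3
) -> List[int]:
    """Return indices of the boxes to keep, highest score first."""
    order = sorted(range(len(boxes)), key=lambda i: scores[i], reverse=True)
    keep: List[int] = []
    while order:
        best = order.pop(0)
        keep.append(best)
        # Drop every remaining box that overlaps the kept one too much.
        order = [i for i in order if iou(boxes[best], boxes[i]) <= iou_threshold]
    return keep
```

Two nearly identical boxes around one face collapse to the higher-scoring one, while a box around a second, separate face survives untouched.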

Deep Learning Models for Vision: An API Approach

Utilizing APIs for Face Detection

Cloud-based APIs offer a convenient and accessible way to add face detection to applications. Services such as Amazon Rekognition, IBM Watson Visual Recognition, and Azure Face API provide powerful face detection capabilities without requiring you to train or deploy a model yourself.

By integrating these APIs through software development kits (SDKs) or RESTful interfaces, developers can easily incorporate face detection into their projects and benefit from deep learning models without having to build and train their own models from scratch.

These APIs rely on pre-trained models that have been trained on vast amounts of data and have learned to recognize the patterns and features in images that indicate human faces. Building on that existing knowledge greatly simplifies the implementation process.

Where a service or framework supports it, fine-tuning or retraining pre-trained models on specific datasets can further improve face detection for specialized applications. Training on data relevant to your use case helps the model fit it better and improves accuracy.

Bringing Deep Learning to Projects

Implementing deep learning-based face detection requires an understanding of neural networks and convolutional layers, the concepts underlying these powerful algorithms. Neural networks are computational systems that learn from examples and make predictions based on what they have learned.

Convolutional layers are a key component of the neural networks used in computer vision tasks like face detection. They slide small learned filters across the input image to extract meaningful features such as edges, textures, and shapes. These features help identify the regions of an image that are likely to contain faces.
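
The filtering step inside a convolutional layer can be sketched in pure Python. Like most deep learning libraries, the function below actually computes cross-correlation (the kernel is not flipped), here with no padding and a stride of 1. The vertical-edge kernel is a hand-picked example; a trained network learns its kernel values from data.

```python
from typing import List

Matrix = List[List[float]]


def conv2d(image: Matrix, kernel: Matrix) -> Matrix:
    """'Valid' 2D cross-correlation: slide the kernel over every full overlap."""
    kh, kw = len(kernel), len(kernel[0])
    out_h = len(image) - kh + 1
    out_w = len(image[0]) - kw + 1
    out: Matrix = []
    for y in range(out_h):
        row = []
        for x in range(out_w):
            # Weighted sum of the image patch currently under the kernel.
            acc = 0.0
            for ky in range(kh):
                for kx in range(kw):
                    acc += image[y + ky][x + kx] * kernel[ky][kx]
            row.append(acc)
        out.append(row)
    return out


# Hand-picked vertical-edge filter: responds where brightness rises left to right.
VERTICAL_EDGE = [[-1.0, 0.0, 1.0],
                 [-1.0, 0.0, 1.0],
                 [-1.0, 0.0, 1.0]]
```

On a flat region the edge filter outputs zero, and it produces a strong response only where a dark area meets a bright one; stacking many such learned filters lets deeper layers combine edges into eyes, noses, and eventually whole faces.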

To bring deep learning into their projects efficiently, developers can use pre-trained models designed for vision tasks like face detection. These models have already been trained extensively on large datasets and have learned to recognize various visual patterns, including faces.

Using these pre-trained models saves the time and resources that training your own models would require, so developers can focus on integrating the models into their projects and fine-tuning them where necessary to optimize performance.

Resources for Advancing Knowledge in Face Detection

Several essential papers, articles, books, and guides provide valuable insights into the field. Exploring them will give you a deeper understanding of the algorithms, techniques, and frameworks used in face detection.

Essential Papers and Articles

One landmark algorithm that revolutionized face detection is the Viola-Jones framework by Paul Viola and Michael Jones, first presented in “Rapid Object Detection using a Boosted Cascade of Simple Features” (2001). Their work introduced a robust algorithm that uses Haar-like features, an integral image, and a cascade of boosted classifiers to detect faces efficiently. Understanding the principles behind this framework is valuable for anyone interested in face detection.

Another significant paper is “DeepFace: Closing the Gap to Human-Level Performance in Face Verification” by Yaniv Taigman et al. This research presented a deep learning model that achieved impressive results in face verification. By using convolutional neural networks (CNNs), DeepFace demonstrated remarkable accuracy and paved the way for further advances in this area.

In the paper “Joint Face Detection and Alignment Using Multitask Cascaded Convolutional Networks” by Kaipeng Zhang et al., the authors propose MTCNN, a widely used multi-task face detection framework. It chains three cascaded CNNs to perform face detection and alignment simultaneously, and it has become popular due to its high accuracy and efficiency.

Recommended Books and Guides

To delve deeper into computer vision principles, including face detection techniques, “Computer Vision: Algorithms and Applications” by Richard Szeliski is an invaluable resource. This comprehensive book covers a broad range of computer vision topics with clear explanations and practical examples.

For those interested specifically in the deep learning concepts relevant to face detection, “Deep Learning” by Ian Goodfellow, Yoshua Bengio, and Aaron Courville offers an extensive exploration of the subject. It provides a solid foundation in neural networks, convolutional networks, and deep learning architectures.

If you prefer a more hands-on approach, “OpenCV 4 with Python Blueprints” by Michael Beyeler is an excellent choice. This book includes practical examples and projects that guide you through implementing face detection with OpenCV. By following its step-by-step instructions, you can gain valuable experience in applying face detection algorithms to real-world scenarios.

By immersing yourself in these resources, you can expand your understanding of face detection algorithms, techniques, and frameworks. Whether you are interested in traditional approaches like the Viola-Jones framework or cutting-edge deep learning models like DeepFace, these resources will equip you with the knowledge needed to tackle face detection challenges effectively.

Conclusion

And there you have it, a comprehensive exploration of face detection! We’ve covered the basics of this fascinating technology, delved into various methods and their pros and cons, and explored its applications in different fields. From security systems to social media filters, face detection has become an integral part of our daily lives.

But the journey doesn’t end here. As technology continues to advance, so too will the capabilities of face detection. It’s crucial to stay updated with the latest developments and explore further resources to deepen your knowledge in this field. Whether you’re a developer, researcher, or simply curious about the topic, there are countless opportunities for you to contribute and benefit from the advancements in face detection.

So go ahead, dive deeper into this exciting realm of computer vision. Explore new algorithms, experiment with cutting-edge models, and discover innovative applications. The world of face detection is waiting for you!

Frequently Asked Questions

What is face detection?

Face detection is a computer vision technology that involves identifying and locating human faces in digital images or videos. It enables machines to recognize and analyze facial features, such as eyes, nose, and mouth, allowing for various applications like facial recognition, emotion analysis, and augmented reality.

How does face detection work?

Face detection algorithms typically use machine learning techniques to analyze patterns and features of an image. They search for specific visual cues that indicate the presence of a face, such as skin tone, geometric shapes, or texture variations. These algorithms then generate bounding boxes around detected faces for further processing or analysis.

What are the advantages of face detection?

Face detection has numerous advantages across different domains. It enhances security systems by enabling access control through facial recognition. It also facilitates automated photo organization and tagging in personal photo libraries. It plays a crucial role in video surveillance, biometric authentication, virtual reality experiences, and even medical diagnostics.

Are there any limitations to face detection?

While face detection technology has made significant advancements, it still has some limitations. Factors like lighting conditions, occlusions (such as glasses or masks), pose variations, and low-resolution images can affect its accuracy. Biases may arise due to differences in demographics or training data quality.

How can I implement face detection using Python?

To implement face detection using Python, you can utilize popular libraries like OpenCV or dlib. These libraries provide pre-trained models specifically designed for face detection tasks. By leveraging their APIs and functions along with basic image processing techniques like resizing or converting to grayscale, you can easily detect faces in images or live video streams.
